Responsible AI

AI Risk Management

Ensuring Responsible and Trustworthy AI

Artificial Intelligence (AI) presents significant opportunities for growth and innovation but also introduces unique risks such as algorithmic bias, limited explainability, privacy issues, and cybersecurity threats. Effective AI risk management—aligned with established frameworks like the NIST AI Risk Management Framework, OECD AI Principles, EU AI Act, and ISO 42001—enables organizations to proactively identify, assess, and mitigate these risks. Implementing robust AI governance not only fosters trust and regulatory compliance but also ensures responsible, secure, and ethical utilization of AI technologies.

Responsible AI: Building Trustworthy AI Systems That Align with Your Values

AI Risk Management is not just a framework—it’s a vital, forward-looking strategy that integrates technical precision, organizational resilience, and ethical vigilance to mitigate potential harms while amplifying the transformative benefits of artificial intelligence. In an era where AI is reshaping industries, robust risk management ensures innovation thrives within safe and responsible boundaries.

The key themes underpinning AI Risk Management:

- Applicable Frameworks: Navigating the intricate web of standards and regulations is critical. Identifying and aligning with the right frameworks ensures compliance and strengthens trust with stakeholders.

- Risk Identification and Assessment: Dive deep into the potential vulnerabilities of AI systems. From detecting biases in algorithms to assessing ethical dilemmas and ensuring reliability, this proactive approach safeguards against unforeseen challenges and reputational risks.

- Risk Mitigation: Implement smart, scalable controls that address risk head-on. These include embedding safeguards into system designs, adopting cutting-edge technologies, and setting up rigorous protocols to ensure risk remains at acceptable levels.

- Governance and Oversight: Empower decision-making with clarity and accountability. Clear roles, responsibilities, and robust oversight structures provide the foundation for ethical AI development and deployment, fostering confidence among teams and external stakeholders alike.

- Continuous Monitoring and Evaluation: AI systems evolve—and so do their risks. Regular evaluations enable institutions to anticipate new challenges, adapt to evolving landscapes, and continually enhance the effectiveness of their risk management strategies.

By weaving these pillars into their operations, organizations not only protect themselves from potential pitfalls but also position AI as a trusted driver of progress. In the rapidly evolving AI landscape, managing risks isn’t just an obligation—it’s an opportunity to lead responsibly and innovate boldly.

The core principles of AI Risk Management are reflected in various influential standards and guidelines including:

T3 Head of Responsible AI - Jen Gennai is a leading voice and pioneer in AI Risk Management, Responsible AI, and AI Ethics.

NIST AI Risk Management Framework: Promotes a structured approach to identifying, assessing, and mitigating AI-related risks across the entire AI lifecycle.

ITI AI Futures Initiative: Emphasizes the importance of risk-based frameworks that are adaptable, promote innovation, and ensure responsible AI development.

OECD AI Principles: Provides a foundation for ethical AI by focusing on fairness, accountability, transparency, and human-centeredness.

ISO/IEC JTC 1/SC 42: Focuses on technical standards for trustworthy AI, aiming to improve safety, security, and reliability.

Our resources about AI RISK MANAGEMENT

AI Risk Frameworks: Aligning AI with Your Enterprise Risk Management

As AI adoption accelerates, 2025 will see critical shifts in the regulatory and policy landscape. Here are key developments and trends to monitor:

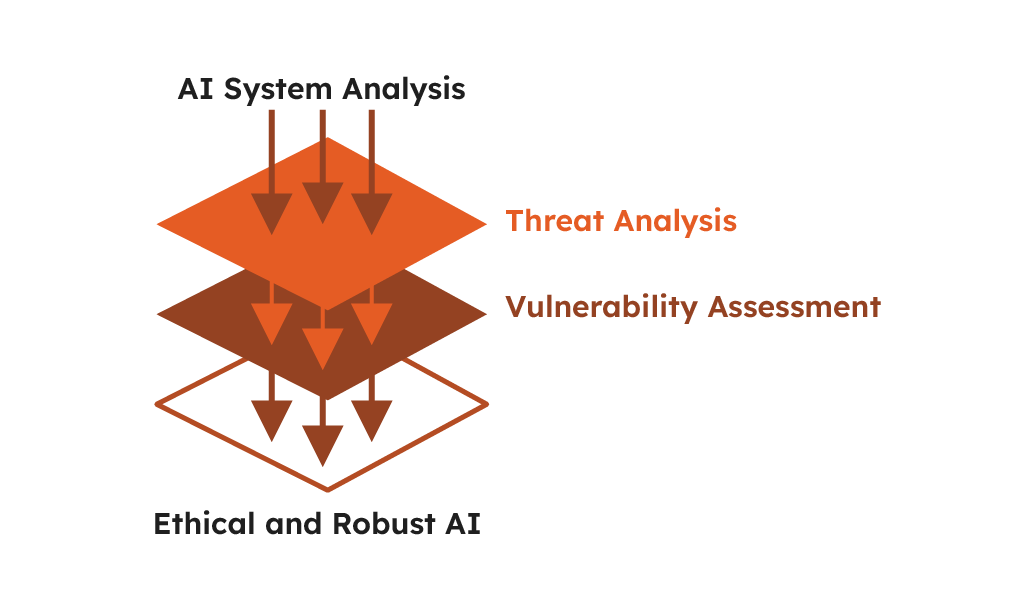

1. Risk Identification

Threat Analysis Phase:

During this phase, the threats to AI security, integrity and ethical behavior are identified. The main threats are:

- Data Poisoning: The training data is altered by bad actors so that the model operates in a biased or degraded manner

- Adversarial Attacks: Attackers exploit model vulnerabilities by injecting inputs that cause wrong outputs (e.g. misclassification in image recognition)

- Model Inversion & Extraction Attacks: Attackers with access to an AI model can reconstruct sensitive training data or replicate proprietary algorithms

- Bias & Discrimination: Systematic bias in AI outputs due to flawed or unrepresentative training data

- Regulatory Non-Compliance: Failure to meet regulations (e.g. GDPR, EU AI Act, sector-specific regulations)
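To make the adversarial attack threat above concrete, here is a minimal sketch of the fast gradient sign method (FGSM) applied to a tiny logistic regression classifier. The weights, input, and `epsilon` are hypothetical values chosen for illustration; a real assessment would target the production model with a dedicated security toolkit.

```python
import math

def predict_proba(weights, bias, x):
    """Probability of the positive class under a logistic regression model."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))

def sign(value):
    """Return -1, 0, or 1 depending on the sign of value."""
    return (value > 0) - (value < 0)

def fgsm_perturb(weights, bias, x, true_label, epsilon):
    """Shift each feature by epsilon in the direction that increases the log-loss."""
    p = predict_proba(weights, bias, x)
    # For logistic regression, d(loss)/d(x_i) = (p - y) * w_i
    gradient = [(p - true_label) * w for w in weights]
    return [xi + epsilon * sign(g) for xi, g in zip(x, gradient)]

# Hypothetical model and input (assumptions for illustration only)
weights, bias = [2.0, -1.0], 0.0
x, true_label = [0.3, 0.2], 1

x_adv = fgsm_perturb(weights, bias, x, true_label, epsilon=0.3)
original_score = predict_proba(weights, bias, x)         # above 0.5: classified positive
adversarial_score = predict_proba(weights, bias, x_adv)  # below 0.5: classification flips
```

A small, bounded change to the input is enough to flip the prediction, which is why adversarial testing belongs in the threat analysis.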

Vulnerability Assessment Phase

Proactively preventing failures through identifying the AI system’s weaknesses:

- Algorithmic Vulnerabilities: Model design, training or interpretability flaws that lead to errors or adverse outcomes

- Data Integrity Risks: Incorrect or inconsistent input data that causes AI underperformance and resulting harm

- Privacy Protections: Unauthorized access to PII / confidential data

- Model Robustness: Resistance to adversarial perturbations and unforeseen edge cases
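As a minimal sketch of how data integrity weaknesses might be surfaced, the check below flags missing values, out-of-range values, and under-represented groups in a small tabular dataset. The field names (`age`, `group`), the valid range, and the 10% representation floor are illustrative assumptions, not values taken from any framework.

```python
from collections import Counter

def validate_records(records, numeric_field, valid_range, group_field, min_group_share=0.10):
    """Return a list of human-readable data integrity findings."""
    findings = []
    low, high = valid_range
    for i, row in enumerate(records):
        value = row.get(numeric_field)
        if value is None:
            findings.append(f"row {i}: missing {numeric_field}")
        elif not (low <= value <= high):
            findings.append(f"row {i}: {numeric_field}={value} outside [{low}, {high}]")
    # Representation check: flag groups that make up too small a share of the data
    counts = Counter(row.get(group_field) for row in records)
    total = len(records)
    for group, n in sorted(counts.items(), key=lambda kv: str(kv[0])):
        if total and n / total < min_group_share:
            findings.append(f"group {group!r}: only {n}/{total} records")
    return findings

# Hypothetical sample: 11 records from group A, 1 from group B, two bad ages
sample = [{"age": 30 + i, "group": "A"} for i in range(11)] + [{"age": 41, "group": "B"}]
sample[2]["age"] = None
sample[5]["age"] = 212

findings = validate_records(sample, "age", (0, 120), "group")
for finding in findings:
    print(finding)
```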

Impact Analysis Phase

Understanding the implications of AI failures to prioritize risk mitigation:

- Operational Disruption: Downtime, bad decisions, security holes in high risk applications (healthcare, finance, autonomous systems)

- Financial Loss: Liability for incorrect AI outputs, legal costs, fines

- Reputational Damage: Consumer trust lost due to AI failures or ethical concerns

- Regulatory Consequences: Sanctions, fines, lawsuits for non-compliance with AI governance frameworks

Examples:

Threat Analysis: IBM’s AI Fairness 360 toolkit is an example of an approach to identify potential biases in AI models. By identifying biases early in the development process, developers can implement mitigation strategies that lead to more ethical and fair AI deployments.

Vulnerability Assessment: The AI Incident Database is a public resource that aggregates reports of AI failures. By analyzing these incidents, developers can identify common vulnerabilities within their AI systems, potentially preventing similar failures.
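The kind of bias check mentioned under Threat Analysis can be illustrated with two common group fairness metrics. This is a plain-Python sketch, not the AI Fairness 360 API; the outcome lists are made-up data, and the 0.8 cut-off (the "four-fifths" rule of thumb) is an assumption that each organization should set for itself.

```python
def selection_rate(outcomes):
    """Share of favorable outcomes (1 = favorable, 0 = unfavorable)."""
    return sum(outcomes) / len(outcomes)

def disparate_impact(unprivileged, privileged):
    """Ratio of favorable-outcome rates: unprivileged group over privileged group."""
    privileged_rate = selection_rate(privileged)
    if privileged_rate == 0:
        raise ValueError("privileged group has no favorable outcomes")
    return selection_rate(unprivileged) / privileged_rate

def statistical_parity_difference(unprivileged, privileged):
    """Difference in favorable-outcome rates: unprivileged minus privileged."""
    return selection_rate(unprivileged) - selection_rate(privileged)

# Hypothetical model decisions for two groups (illustrative data only)
privileged = [1, 1, 1, 0, 1, 1, 0, 1, 1, 1]    # 8 of 10 favorable
unprivileged = [1, 0, 0, 1, 0, 1, 0, 0, 1, 0]  # 4 of 10 favorable

ratio = disparate_impact(unprivileged, privileged)
gap = statistical_parity_difference(unprivileged, privileged)
flagged = ratio < 0.8  # assumed threshold for further review
```

A ratio well below 1 or a large negative gap does not prove discrimination, but it is a signal that the model and its training data deserve closer review.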

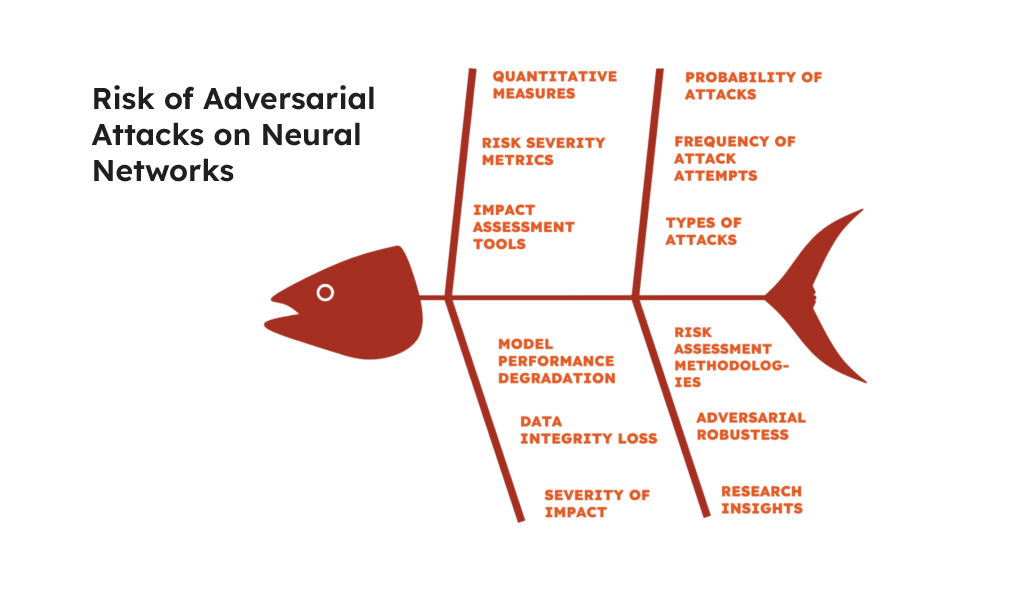

2. Risk Assessment

To assess AI risks effectively, organizations should:

- Establish interdisciplinary teams (so-called AI Red Teams) to stress test and challenge AI systems on a regular basis.

- Leverage structured frameworks and standardized templates to perform deep and consistent risk assessments.

- Deploy automated tools and techniques for explainability to identify, in a systematic way, weaknesses and ethical concerns.

- Regularly update and refine processes based on prevailing market solutions and new risks.

This will lead to a well-rounded, thorough, and forward-looking approach to the management of AI risk.
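As one illustration of an automated explainability technique, the sketch below computes permutation feature importance: it shuffles one input feature at a time and measures how much accuracy drops. This is a simpler, model-agnostic stand-in rather than SHAP or LIME, and the model, data, and repeat count are hypothetical.

```python
import random

def accuracy(model, rows, labels):
    """Share of rows for which the model's prediction matches the label."""
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(rows)

def permutation_importance(model, rows, labels, feature_index, rng, repeats=20):
    """Average accuracy drop when one feature column is randomly shuffled."""
    baseline = accuracy(model, rows, labels)
    drops = []
    for _ in range(repeats):
        column = [r[feature_index] for r in rows]
        rng.shuffle(column)
        shuffled = [
            r[:feature_index] + (v,) + r[feature_index + 1:]
            for r, v in zip(rows, column)
        ]
        drops.append(baseline - accuracy(model, shuffled, labels))
    return sum(drops) / repeats

# Hypothetical model: approves when feature 0 (a score) exceeds 0.5 and ignores feature 1
model = lambda row: int(row[0] > 0.5)
rows = [(i / 10, (i * 7 % 10) / 10) for i in range(10)]
labels = [model(r) for r in rows]

rng = random.Random(42)  # fixed seed so the sketch is repeatable
importance_score = permutation_importance(model, rows, labels, 0, rng)
importance_noise = permutation_importance(model, rows, labels, 1, rng)
```

A feature the model relies on shows a positive importance, while a feature it ignores shows none. An unexpectedly high importance for a sensitive attribute is the kind of finding a risk assessment should escalate.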

Latest Research:

Probability and Severity Evaluation: Research by MIT on adversarial robustness in neural networks assesses the likelihood and impact of adversarial attacks. This research provides quantitative measures to gauge risk severity and helps organizations prioritize risks based on empirical data.
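Probability and severity evaluation is often operationalized as a simple scoring matrix. The sketch below assumes 1 to 5 scales for both dimensions and hypothetical band cut-offs (15 and 8); the risks and their ratings are illustrative, not empirical results.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Risk:
    name: str
    likelihood: int  # 1 (rare) to 5 (almost certain)
    severity: int    # 1 (negligible) to 5 (critical)

    def __post_init__(self):
        for field_name in ("likelihood", "severity"):
            if not 1 <= getattr(self, field_name) <= 5:
                raise ValueError(f"{field_name} must be between 1 and 5")

    @property
    def score(self):
        """Risk score as likelihood multiplied by severity."""
        return self.likelihood * self.severity

def band(score):
    """Map a score to a priority band (cut-offs are assumptions)."""
    if score >= 15:
        return "high"
    if score >= 8:
        return "medium"
    return "low"

def prioritize(risks):
    """Order risks from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)

register = [
    Risk("Data poisoning", likelihood=2, severity=4),
    Risk("Adversarial attack", likelihood=3, severity=5),
    Risk("Model drift", likelihood=4, severity=3),
]

for risk in prioritize(register):
    print(f"{risk.name}: score={risk.score}, band={band(risk.score)}")
```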

Risk Identification

Infrastructure incl. Data & Networks

- Security & Privacy

- Cyberattacks, adversarial exploits, infrastructure vulnerabilities

Model Architecture

- Bias & Fairness

- Explainability & Transparency

- Adversarial Attacks

Data & Training

- Data Bias & Quality

- IP & Compliance

Large Language Model (LLM)

- Misinformation

- Manipulation

- Ethical Concerns

Deployment & API

- Security Gaps

- Model Drift

Application & Compliance

- Misinformation

- Regulatory Violations

- Job Displacement

Governance & Accountability

- Deepfakes & Misinformation

- Weak AI Governance

- Trust & Reputation

- Legal Liability

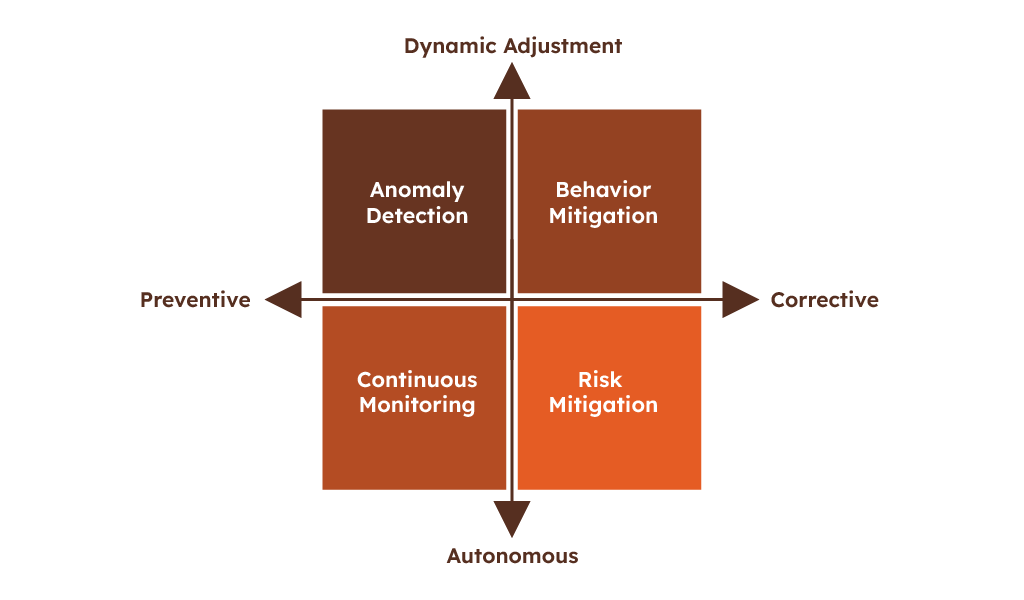

3. Risk Control

Regularly engage cross-functional committees or boards to oversee AI.

Preventive (Proactive) Controls:

- Data Integrity and Quality Controls

  - Implement rigorous data validation, verification and cleansing processes

  - Validate that data sets are accurate, representative, unbiased and suitably secured

- Model Explainability and Transparency

  - Adopt explainable AI methodologies (e.g., SHAP, LIME) to improve understanding and trust

  - Institutionalize model interpretability in AI development

- Adversarial Testing and AI Security Assessments

  - Carry out AI Red Teaming, penetration testing and vulnerability assessments

  - Leverage automated security tools and frameworks (e.g., Microsoft Counterfit, Google’s SAIF)

- Privacy-by-Design

  - Leverage privacy-preserving methods, like differential privacy and federated learning

  - Actively align AI initiatives with GDPR and other privacy standards

- Continuous Monitoring and Early Warning Systems

  - Integrate real-time monitoring and automated anomaly and deviation detection

  - Set thresholds and alerts for suspected performance deterioration or malicious interference
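To illustrate one of the privacy-preserving methods listed above, here is a minimal sketch of the Laplace mechanism for differential privacy applied to a counting query. The dataset and the `epsilon` value are assumptions for illustration; production systems should rely on a vetted privacy library rather than hand-rolled noise.

```python
import random

def laplace_noise(scale, rng):
    """Draw Laplace(0, scale) noise.

    The difference of two independent exponential draws with mean `scale`
    follows a Laplace distribution with that scale.
    """
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def private_count(values, predicate, epsilon, rng=None):
    """Epsilon-differentially private count; a counting query has sensitivity 1."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = rng or random.Random()
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)

# Hypothetical customer ages (illustrative data only)
ages = [23, 35, 41, 52, 67, 38, 45]

# Smaller epsilon means more noise and stronger privacy
noisy_count = private_count(ages, lambda a: a >= 40, epsilon=0.5, rng=random.Random(7))
```

The released count is deliberately imprecise: the analyst trades some accuracy for a formal bound on how much any single individual's record can influence the output.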

Corrective (Reactive) Controls:

- Incident Response and Recovery Plans

  - Establish methodical incident response procedures for AI incidents or security breaches

  - Regularly test response plans to minimize recovery time and impact

- Human-in-the-Loop Interventions

  - Apply human intervention to override, correct or intercept AI decisions in a crisis

  - Institute escalation protocols with predefined triggers for human participation

- Model Retraining and Updating

  - Define the process to immediately retrain or refresh models upon the identification of a risk

  - Use incidents to reinforce future model robustness

- Communication and Transparency

  - Issue clear, timely, and transparent communication following an incident to preserve stakeholder confidence

  - Use incidents to improve internal understanding of risk and to prevent recurrence

- Audit Trails and Accountability Logs

  - Keep thorough audit logs for accountability, incident investigation, and corrective actions

  - Conduct retrospectives to expose root causes and systemic weaknesses
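Escalation protocols with predefined triggers can be expressed as a small routing rule. In the sketch below, the `Decision` fields, the trigger names, and the thresholds (0.85 confidence, 10,000 in exposure) are all hypothetical; each organization would define its own triggers.

```python
from dataclasses import dataclass

@dataclass
class Decision:
    case_id: str
    outcome: str       # e.g. "approve" or "reject"
    confidence: float  # model confidence in [0, 1]
    amount: float      # hypothetical measure of financial impact

def route(decision, min_confidence=0.85, max_auto_amount=10_000):
    """Return ('auto' or 'human_review', list of triggers that fired)."""
    triggers = []
    if decision.confidence < min_confidence:
        triggers.append("low_confidence")
    if decision.amount > max_auto_amount:
        triggers.append("high_impact")
    if decision.outcome == "reject":
        triggers.append("adverse_outcome")
    return ("human_review" if triggers else "auto", triggers)

routine = route(Decision("c1", "approve", confidence=0.97, amount=500))
escalated = route(Decision("c2", "reject", confidence=0.60, amount=25_000))
```

Returning the list of triggers alongside the route keeps the reason for each escalation available for later review.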

Innovation:

Preventive Measures: Autonomous monitoring systems that continuously scan for anomalies in AI behavior exemplify preventive measures. These systems help prevent risks from materializing by providing real-time alerts that enable immediate intervention.

Corrective Actions: DeepMind’s safety-critical AI initiatives involve creating AI systems capable of adjusting their behavior dynamically to mitigate risks when detected. This approach is particularly crucial in environments where AI decisions can have significant consequences.

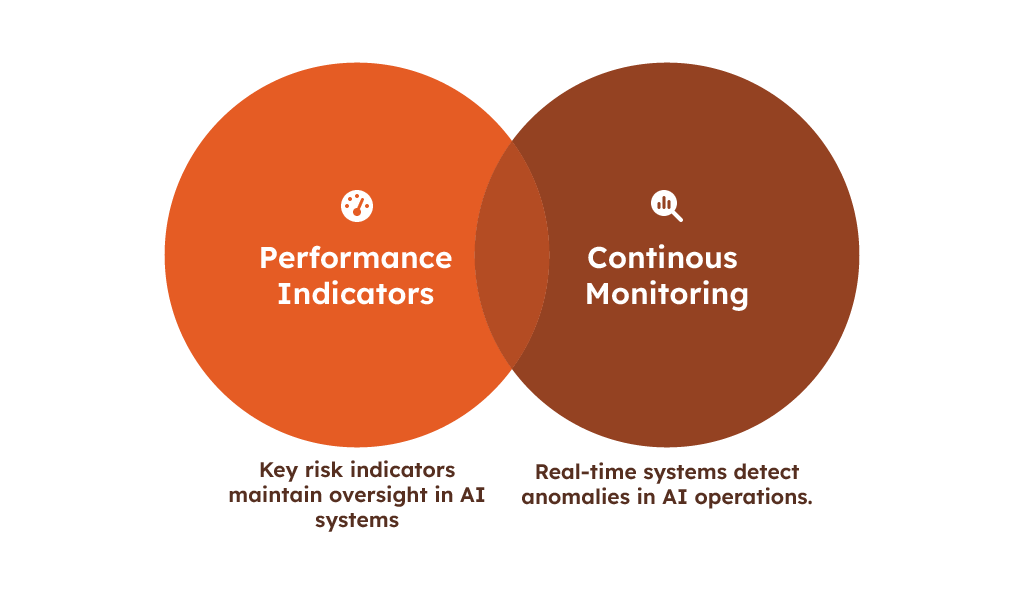

4. Risk Monitoring and Reporting

Continuous monitoring and reporting allow for the ongoing management and adjustment to evolving AI risks. Core activities include:

1. Continuous Risk Monitoring:

- Utilize real-time analytics and anomaly detection.

- Track and monitor model drift, performance degradation, new exposures.

2. Automated AI Risk Dashboards:

- Build automated dashboards covering risk status, model performance, and regulatory compliance metrics.

- Draw on frameworks and tools (e.g., Google SAIF, Microsoft Counterfit) to supply security findings for risk visualization.

3. Standard Reporting Protocols:

- Regular (monthly / quarterly) risk and compliance reviews.

- Document review outcomes, incident outcomes, and risk treatment effectiveness.

4. Incident and Anomaly Alerts:

- Set up automatic alerts to react in real-time to critical situations.

- Build escalation paths into the system so that human intervention is available when needed.

5. Audit Trails and Accountability Logs:

- Record data manipulations, model outputs and system actions.

- Support forensic investigations and compliance reporting.

6. Feedback Loops and Continual Improvement:

- Re-assess the risk once new monitoring indicates an incident or an incident report is received.

- Continually refine risk treatments and management practices.
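The audit trail activity above can be sketched as a hash-chained, append-only log in which every entry commits to the one before it, so later edits become detectable. The class, field names, and sample events are hypothetical; a real log would also record timestamps and live in tamper-resistant storage.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64

def _digest(body):
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

class AuditLog:
    def __init__(self):
        self.entries = []

    def append(self, actor, action, details):
        """Add an entry that includes the hash of the previous entry."""
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {"actor": actor, "action": action, "details": details, "prev_hash": prev_hash}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self):
        """Return True only if every entry is unmodified and correctly chained."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True

log = AuditLog()
log.append("credit-model-v3", "prediction", {"case_id": "c1", "output": "approve"})
log.append("analyst-17", "override", {"case_id": "c1", "output": "reject"})

intact_before = log.verify()                      # chain is consistent
log.entries[0]["details"]["output"] = "reject"    # simulate tampering with history
intact_after = log.verify()                       # tampering is detected
```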

Examples:

Continuous Monitoring: The development of systems for real-time anomaly detection in AI operations is crucial for ongoing risk monitoring. These systems enable organizations to respond swiftly to unexpected changes or failures in AI behavior.
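Model drift is one of the changes continuous monitoring is meant to catch. A minimal sketch, assuming the Population Stability Index (PSI) as the drift measure: it compares the binned distribution of a feature in production against a baseline. The bin count, the smoothing constant, and the 0.25 alert level (a commonly cited rule of thumb) are assumptions, and the data is synthetic.

```python
import math

def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a baseline sample and a current sample of one numeric feature."""
    low, high = min(expected), max(expected)
    width = (high - low) / bins or 1.0  # avoid zero width for constant baselines

    def proportions(values):
        counts = [0] * bins
        for v in values:
            index = int((v - low) / width)
            index = min(max(index, 0), bins - 1)  # clamp values outside the baseline range
            counts[index] += 1
        # Replace empty bins with a tiny share so the logarithm stays defined
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Synthetic data: the current sample is shifted toward higher values
baseline = [i / 100 for i in range(100)]
current = [0.5 + i / 200 for i in range(100)]

psi_same = population_stability_index(baseline, baseline)
psi_shifted = population_stability_index(baseline, current)
drift_alert = psi_shifted > 0.25  # assumed alert threshold
```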

Performance Indicators: Implementing key risk indicators (KRIs) in AI systems helps maintain oversight by triggering alerts when risk thresholds are exceeded.
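A KRI check of this kind can be sketched as a table of thresholds evaluated against the latest metrics. The indicator names and limits below are hypothetical examples rather than recommended values.

```python
# Hypothetical KRIs: ("max", limit) alerts above the limit, ("min", limit) alerts below it
KRI_THRESHOLDS = {
    "false_positive_rate": ("max", 0.05),
    "psi_drift": ("max", 0.25),
    "human_override_rate": ("max", 0.10),
    "accuracy": ("min", 0.90),
}

def breached_kris(metrics, thresholds=KRI_THRESHOLDS):
    """Return alert messages for breached or unreported indicators."""
    alerts = []
    for name, (kind, limit) in thresholds.items():
        value = metrics.get(name)
        if value is None:
            # A missing metric is itself a monitoring gap worth flagging
            alerts.append(f"{name}: no data reported")
        elif kind == "max" and value > limit:
            alerts.append(f"{name}: {value} above limit {limit}")
        elif kind == "min" and value < limit:
            alerts.append(f"{name}: {value} below limit {limit}")
    return alerts

latest_metrics = {"false_positive_rate": 0.08, "psi_drift": 0.12, "accuracy": 0.93}
alerts = breached_kris(latest_metrics)
for alert in alerts:
    print(alert)
```

Treating an unreported indicator as an alert keeps silent monitoring failures from being mistaken for a healthy system.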

5. Governance and Compliance

Effective governance and compliance of AI systems require clear accountabilities, well-defined oversight structures, and adherence to emerging regulations such as the EU AI Act, UK ICO and CMA guidance, and sector-specific regulations such as ESMA standards. Ethical behaviour should be embedded using guidelines such as the OECD AI Principles and ISO/IEC 42001, supported by frequent internal and external audits, clear reporting of AI risks and incidents, and ongoing employee training to reinforce ethical and regulatory compliance in the delivery of responsible and trustworthy AI globally.

1. AI Bill Reintroduced to the House of Lords (UK): Lord Holmes of Richmond has reintroduced the Artificial Intelligence (Regulation) Bill as a Private Members’ Bill in the House of Lords. The bill seeks to create a regulatory framework for AI in the UK.

2. Third Draft of the General-Purpose AI Code of Practice: The European Commission has released the third draft of the code, which sets out compliance criteria for general-purpose AI systems under the EU AI Act.

3. Common Framework for AI Incident Reporting by OECD: The Organisation for Economic Co-operation and Development (OECD) introduced a framework for uniform reporting of AI incidents to improve transparency and accountability in the use of AI.

4. European Commission’s Model Contractual Clauses for Non-High-Risk AI Systems Procurement: The European Commission announced model contractual clauses for procuring non-high-risk AI systems, intended to meet the requirements of prevailing laws and to encourage the adoption of best practices in AI.

5. Japan’s New AI Law: The Government of Japan has passed a new AI bill governing the use and development of artificial intelligence, grounded primarily in ethics and societal values.

Latest Research:

Policy Development and Oversight: The burgeoning field of Regulatory Technology (RegTech) uses AI to streamline compliance with evolving regulations. These AI-driven solutions help organizations remain agile in dynamic regulatory environments, demonstrating how AI can facilitate governance and compliance.

6. Stakeholder Engagement and Training

Successfully managing AI risks demands a dialogue with stakeholders – from executives to employees, customers to regulators – to raise awareness and establish accountability. Regular training should articulate the AI risks, ethical considerations, and compliance duties in plain language. Conduct continuing conversations using workshops, scenario planning exercises, and feedback mechanisms. This will allow stakeholders to comprehend their role in controlling AI risks and advancing the cause of responsible AI, reinforcing operational resiliency, regulatory adherence, and stakeholder confidence.

Innovation:

Internal Training and Public Engagement: Research into explainable AI (XAI) aims to make AI systems more transparent and understandable, enhancing stakeholder engagement by elucidating AI decision-making processes.

7. Review and Revision

Regularly reviewing and revising the risk management framework ensures that it remains effective and relevant in managing the risks of evolving AI technologies.

Examples:

Feedback Loops and Periodic Review: The continuous updating of tools like IBM’s AI Fairness 360 toolkit exemplifies the importance of adaptive risk management practices that evolve in response to new insights and changing conditions.

Implementation and Continuous Evolution

Implementing this comprehensive AI risk management framework requires not only adherence to technical standards but also a commitment to continuous evaluation and adaptation as AI technologies advance. Each component of the framework is crucial for ensuring that AI systems are secure, reliable, and aligned with ethical and regulatory standards. As AI continues to evolve, so too must the strategies and tools used to manage its associated risks, requiring ongoing education and adaptation from all stakeholders involved.

Who does it impact?

Asset Managers

Banks

Supervisors

Commodity Houses

Fintechs

Examples of what we do at T3

Supporting a Bank in enhancing AI Literacy

CHALLENGE: Banks are increasingly deploying AI-driven solutions to enhance operational efficiency, customer experience, and risk management. However, many financial institutions face challenges in cultivating AI literacy.

OBJECTIVES

- Align AI competencies with EU AI Act requirements and industry benchmarks

- Strengthen staff skills to minimise regulatory, reputational, and operational risks

APPROACH

- Assess current literacy levels across teams

- Define clear, tailored learning objectives

- Develop e-learning modules and interactive workshops

- Consult external experts for targeted insights

- Establish continuous feedback loops for iterative improvement

KEY GAPS

- Insufficient foundational AI understanding

- Regulatory confusion and compliance uncertainties

- Cultural inertia & reluctance to adopt new practices

- Heightened risk of biased AI outcomes

RESULTS

- Increased staff confidence & AI proficiency

- Reduced AI-related risks through informed, compliant usage

- Stronger competitive advantage via responsible AI adoption and innovation

Enhancing Responsible AI in a Tech Firm

CHALLENGE: Augment and operationalise a Responsible AI (RAI) framework that meets regulatory requirements, reduces risk, and strengthens brand trust within a large tech firm.

OBJECTIVES

- Align with evolving regulations & tech

- Minimise legal & reputational risks

- Unlock new revenue opportunities & reinforce brand credibility

WHY IT MATTERS

- Avoid costly fines & scrutiny through proactive compliance

- Gain a competitive edge by delivering transparent, future-proof AI solutions

- Bolster stakeholder trust & facilitate sustainable innovation

RESULTS

- Streamlined compliance processes with measurable ROI improvements

- Ongoing user confidence & market-leading AI initiatives

IMPLEMENTATION SET-UP

- Review & Map: Assess existing RAI governance against recognised standards, identifying key gaps

- Governance & Oversight: Enhance RAI principles & form a dedicated AI Ethics Board to provide strategic guidance

- Frameworks & Tools: Develop fairness & impact testing methodologies; adopt/customise bias detection toolkits

- Transparency & Risk: Establish a tiered transparency reporting process & dynamic risk-tiering approach

- Audits & Improvement: Conduct regular audits to monitor bias, performance, and compliance; feed insights back into product development

- Lifecycle Integration: Embed RAI checks throughout product lifecycles & for emerging technologies

Harnessing the Power of AI Responsibly: Balancing Innovation with Risk

In response to the EU AI Act, the European Union’s regulation for the safe and ethical development and use of artificial intelligence (AI), organizations can engage in various activities to ensure compliance and ethical application of AI. Working with senior AI and Compliance advisors who are at the forefront of AI supervisory dialogue, we can support the following activities:

1. Tailored Frameworks

We guide you through relevant frameworks like the OECD AI principles, national regulations, and industry-specific standards. We don’t just provide templates but help you operationalize them within your organizational structure.

2. Governance Structures

We help establish clear roles, responsibilities, and escalation pathways for AI risk. This may involve setting up AI oversight committees or integrating AI risk into existing risk management structures.

3. Selecting the Right Tools

We assess your needs and recommend the best mix of in-house, open-source, and cloud-based tools for model validation, bias detection, explainability, and ongoing monitoring, taking budget and existing infrastructure into account.

4. Smart Practices and Training

We go beyond theory. We share best practices, case studies, and practical methodologies for embedding AI risk management into your development, deployment, and monitoring processes. We offer general AI awareness training for all relevant employees, along with role-specific deep dives for developers, risk analysts, and business leaders involved in AI projects. Training includes practical exercises to help teams think critically about AI-specific risks (bias, security) as they pertain to their particular products and business lines.

See our RESPONSIBLE AI TRAINING page.

5. Leveraging Existing Resources

Our focus is synergy. We identify how AI risk management can fit into existing risk and compliance processes, avoiding redundant efforts and maximizing the use of current personnel.

6. Governance & Compliance

We help you balance central oversight with distributed accountability, empowering product teams without compromising risk management.

Our Compliance Experts actively engage with stakeholders, including regulatory bodies, customers, and partners, to discuss AI utilization and compliance.

Jen Gennai

Jen Gennai – speaking at re:publica (Berlin – June 2023)

Mastering Responsible AI

Jen Gennai is a leading voice and pioneer in AI Risk Management, Responsible AI, and AI Ethics.

- Founded Responsible Innovation at Google, one of the first institutions worldwide to adopt AI Principles to shape how AI is developed and deployed responsibly.

- Informed governmental and private AI programs on how to implement AI risk management.

- Contributed to EU AI Act, UK Safety Principles, G7 Code of Conduct, OECD AI Principles & NIST.

- International Panellist & Speaker (Davos, World Economic Forum, UNESCO, etc.).

More information on Jen Gennai here.

Reach out to Jen: [email protected]
